How Multi-Sensor Fusion Powers Fleet Data Capture

Mar 23, 2026 Resolute Dynamics

Modern fleet vehicles generate enormous amounts of data every second. Cameras capture visual context around the vehicle, radar detects nearby objects, GNSS provides location and speed information, and the vehicle’s CAN bus reports internal telemetry such as braking, acceleration, and engine state.

Individually, these sensors provide useful signals. But on their own they only offer partial insight into what is happening on the road.

To build reliable fleet intelligence systems, these signals must be combined into a unified data pipeline. This is where multi-sensor fusion architecture becomes essential.

Sensor fusion allows fleet platforms to correlate environmental data with vehicle telemetry, producing a far more accurate representation of driving conditions, vehicle behavior, and potential safety risks.

For fleet engineers and system architects, designing this architecture involves solving several challenges:

- integrating sensors with different data formats
- synchronizing timestamps across devices
- processing large volumes of video and telemetry data
- transmitting relevant insights to cloud platforms

This guide explains how modern fleet systems combine camera, radar, GNSS, and CAN bus data into a unified onboard data stream, and how that architecture enables safer and more intelligent fleet operations.

What Is Multi-Sensor Fusion in Fleet Telematics?

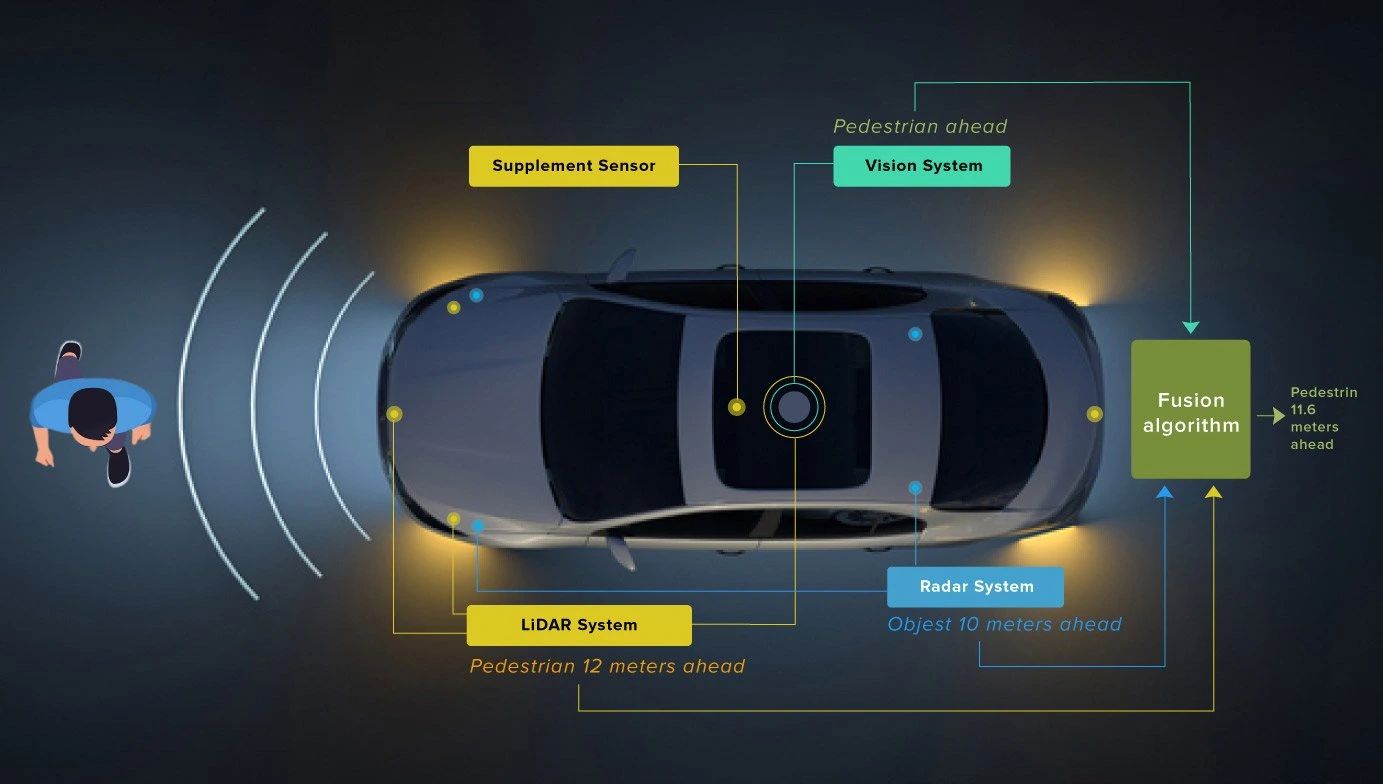

Multi-sensor fusion is the process of combining data from multiple sensors to produce a single, consistent understanding of a vehicle’s state and environment.

In fleet telematics systems, this means merging signals from sensors that observe different aspects of the driving environment.

Typical examples include:

- Cameras detecting lane markings and traffic signs
- Radar measuring the distance and velocity of nearby objects
- GNSS determining vehicle location and route
- CAN bus data reporting driver inputs and vehicle performance

By correlating these data streams, fleet platforms can build context-aware driving insights rather than isolated sensor readings.

For example:

A radar sensor may detect an object ahead.

A camera confirms that the object is a vehicle.

CAN data indicates that the driver is accelerating.

GNSS confirms the vehicle is approaching an intersection.

Together, these signals provide a far more complete picture than any single sensor could deliver.

Why Single-Sensor Data Is Not Enough

Fleet safety systems that rely on only one sensor type are inherently limited. Each sensing technology has strengths and weaknesses.

Cameras

Vision systems provide rich environmental context but struggle in:

- poor lighting
- fog
- heavy rain
- glare

Radar

Radar sensors excel at detecting distance and velocity but cannot easily classify objects.

GNSS

GNSS provides location and speed data, although accuracy can degrade in tunnels and dense urban areas, and it cannot describe the surrounding environment.

CAN Bus

Vehicle telemetry from the CAN bus reports how the vehicle is behaving but not what is happening around it.

Because each sensor fails under different conditions, combining them makes the overall system more reliable than any single sensor.

If one sensor is affected by weather or environmental conditions, other sensors can compensate. This redundancy is particularly important in fleet safety systems and Advanced Driver Assistance Systems (ADAS).

Core Sensors Used in Fleet Data Capture Systems

Most modern fleet platforms rely on four primary data sources.

Camera Systems

Cameras provide the visual foundation of many fleet intelligence platforms.

They are used for:

- road scene monitoring
- traffic sign recognition
- lane detection
- driver monitoring
- incident recording

Camera systems generate large amounts of data, often several megabytes per second. Because of this, edge processing is usually required to extract meaningful insights before transmitting data to cloud platforms.

Typical outputs include:

- detected objects
- lane boundaries
- traffic sign classification
- driver behavior events

Radar Sensors

Radar sensors measure distance and relative velocity using radio waves.

They are particularly effective in conditions where cameras struggle, such as:

- nighttime driving
- heavy rain
- fog
- dust

Radar is commonly used in:

- forward collision warning systems
- adaptive cruise control
- blind spot monitoring

In fleet applications, radar data is often fused with camera perception to improve object detection accuracy.

GNSS Positioning

Global Navigation Satellite Systems (GNSS) provide precise location and velocity information.

Fleet platforms typically support multiple satellite constellations, including:

- GPS
- GLONASS
- Galileo
- BeiDou

GNSS enables several key fleet capabilities:

- route tracking
- geofencing
- speed monitoring
- trip reconstruction

When combined with CAN data, GNSS can also help verify driver compliance with speed limits or route restrictions.
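Geofencing, one of the capabilities listed above, can be illustrated with a circular fence check. This is a minimal sketch, assuming a fence defined by a center point and a radius; the function names `haversine_m` and `inside_geofence` are illustrative, and production systems often use polygon fences instead.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside_geofence(lat, lon, center_lat, center_lon, radius_m):
    """True if the GNSS fix lies within radius_m of the fence center."""
    return haversine_m(lat, lon, center_lat, center_lon) <= radius_m
```

A fleet platform would typically run a check like this on each GNSS fix and raise an event when the result changes from inside to outside, or the reverse.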

CAN Bus Vehicle Data

The Controller Area Network (CAN bus) is the internal communication network used by vehicle components.

It provides access to a wide range of telemetry signals, including:

- vehicle speed
- throttle position
- braking activity
- engine RPM
- steering angle
- fuel consumption

For fleet operators, CAN data is critical for:

- driver behavior analysis
- predictive maintenance
- compliance monitoring
- operational efficiency tracking

When correlated with sensor data, CAN signals provide important context about how a vehicle is being driven.
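To make the idea of CAN telemetry concrete, the sketch below decodes a single frame payload into engineering units. The byte layout, scale factors, and the `VehicleState` type are hypothetical and chosen only for illustration; real signal layouts are manufacturer-specific and normally come from a signal database rather than being hard-coded.

```python
import struct
from dataclasses import dataclass


@dataclass
class VehicleState:
    speed_kmh: float
    engine_rpm: float
    throttle_pct: float
    brake_pressed: bool


def decode_vehicle_state(payload: bytes) -> VehicleState:
    """Decode a hypothetical CAN payload.

    Assumed layout (little-endian), not taken from any real vehicle:
      bytes 0-1: speed, 0.01 km/h per bit
      bytes 2-3: engine speed, 0.25 rpm per bit
      byte  4  : throttle, 0.4 % per bit
      byte  5  : flags, bit 0 = brake pedal pressed
    """
    if len(payload) < 6:
        raise ValueError("payload too short for the assumed layout")
    raw_speed, raw_rpm, raw_throttle, flags = struct.unpack_from("<HHBB", payload)
    return VehicleState(
        speed_kmh=raw_speed * 0.01,
        engine_rpm=raw_rpm * 0.25,
        throttle_pct=raw_throttle * 0.4,
        brake_pressed=bool(flags & 0x01),
    )
```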

Architecture of a Multi-Sensor Fleet Data Platform

Designing a multi-sensor fleet system typically involves several architectural layers.

1. Sensor Layer: Data Capture

At the base of the system is the sensor layer, where raw data is generated.

This layer includes devices such as:

- forward-facing cameras
- cabin cameras
- radar modules
- GNSS receivers
- vehicle CAN interfaces
- inertial measurement units (IMUs)

Each sensor produces data at different rates.

For example:

- cameras may capture 30 frames per second
- radar sensors may update at 10–20 Hz
- CAN signals may transmit hundreds of messages per second

Managing these streams requires careful synchronization.

2. Edge Processing Layer

Because raw sensor data volumes are extremely large, most fleet platforms perform processing inside the vehicle.

Edge computing devices handle tasks such as:

- video encoding
- object detection
- sensor calibration
- timestamp alignment
- event detection

This layer typically runs on embedded hardware platforms with AI acceleration capabilities.

Edge processing reduces the amount of data that must be transmitted to cloud systems while enabling real-time safety alerts.

3. Data Fusion Layer

The fusion layer is where sensor streams are combined into meaningful insights.

Several fusion strategies are commonly used.

Early Fusion

Raw sensor data is merged before feature extraction.

This approach can provide the most detailed insights but requires significant computational resources.

Mid-Level Fusion

Sensors are processed individually, and their extracted features are combined.

For example:

- radar detects an object
- camera identifies the object type

These features are fused to improve detection accuracy.
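The radar and camera example can be sketched as a simple association step. This is a minimal illustration, assuming both sensors report a bearing (azimuth) for each object in the same vehicle frame; the types, the greedy nearest-bearing matching, and the 2-degree gate are assumptions made for the sketch, not a description of any particular product.

```python
from dataclasses import dataclass


@dataclass
class RadarTrack:
    azimuth_deg: float
    range_m: float
    closing_speed_mps: float


@dataclass
class CameraDetection:
    azimuth_deg: float
    label: str
    confidence: float


@dataclass
class FusedObject:
    label: str
    range_m: float
    closing_speed_mps: float
    confidence: float


def fuse(radar, camera, max_gap_deg=2.0):
    """Attach the nearest-bearing camera label to each radar track (greedy)."""
    unused = list(camera)
    fused = []
    for track in radar:
        best = min(unused, key=lambda d: abs(d.azimuth_deg - track.azimuth_deg), default=None)
        if best is not None and abs(best.azimuth_deg - track.azimuth_deg) <= max_gap_deg:
            unused.remove(best)  # each camera detection is used at most once
            fused.append(FusedObject(best.label, track.range_m, track.closing_speed_mps, best.confidence))
        else:
            # Radar saw something the camera could not classify.
            fused.append(FusedObject("unknown", track.range_m, track.closing_speed_mps, 0.0))
    return fused
```

The fused object keeps the radar's range and closing speed and the camera's label, which mirrors the division of strengths described earlier.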

Late Fusion

Each sensor produces a decision or classification, and the results are combined.

This approach is simpler but may lose some contextual detail.
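One simple way to combine per-sensor decisions is a weighted average of their confidence scores. The sketch below assumes each sensor reports a probability-like score between 0 and 1 for the same hypothesis (for example, "obstacle ahead"); the function name, the weights, and the 0.5 threshold are illustrative assumptions.

```python
def late_fusion(decisions, weights, threshold=0.5):
    """Combine per-sensor confidence scores into one decision.

    decisions: dict of sensor name -> confidence in [0, 1]
    weights:   dict of sensor name -> non-negative trust weight
    Returns (combined_score, decision).
    """
    total = sum(weights[name] for name in decisions)
    if total <= 0:
        raise ValueError("at least one sensor needs a positive weight")
    score = sum(weights[name] * conf for name, conf in decisions.items()) / total
    return score, score >= threshold
```

Lowering the camera's weight in fog, for instance, lets the radar's decision dominate without changing either sensor's own processing.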

4. Unified Vehicle Data Stream

Once fused, sensor data can be packaged into a unified telemetry stream.

Examples of fused insights include:

| Insight | Sensor Combination |
|---|---|
| Collision warning | Radar + camera |
| Speed compliance | GNSS + CAN |
| Lane departure | Camera + steering angle |
| Harsh braking | Accelerometer + CAN |

These events can then be transmitted to fleet management platforms for monitoring and analytics.
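A fused event might be packaged for transmission roughly as follows. The `FusedEvent` schema and its field names are hypothetical; the sketch only shows the idea of one record that names its contributing sensors and serializes to a compact, deterministic JSON payload.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class FusedEvent:
    event_type: str    # e.g. "speed_compliance"
    timestamp_ms: int  # time on the common clock
    vehicle_id: str
    sources: list      # sensors that contributed, e.g. ["gnss", "can"]
    lat: float
    lon: float
    details: dict = field(default_factory=dict)


def to_wire(event: FusedEvent) -> str:
    """Serialize to compact JSON with sorted keys for stable output."""
    return json.dumps(asdict(event), separators=(",", ":"), sort_keys=True)
```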

The Importance of Time Synchronization

One of the most difficult challenges in multi-sensor architectures is time alignment.

Because sensors sample at different rates and often run on independent clocks, their data must be referenced to a common clock.

Without proper synchronization:

- video frames may not align with radar readings
- CAN signals may not match driver actions
- incident analysis may become unreliable

Common synchronization techniques include:

- GNSS time reference
- Precision Time Protocol (PTP)
- hardware timestamping

Accurate timestamps allow systems to correlate events across sensors with millisecond precision.
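Once every stream carries timestamps from the same clock, aligning two streams becomes a nearest-neighbor search. The sketch below pairs each camera frame with the closest radar sample and drops pairs that are too far apart; the function names and the 50 ms tolerance are assumptions for illustration, and both timestamp lists are assumed to be sorted.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Index of the value in a sorted, non-empty list that is closest to t."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbor is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(frame_ts_ms, radar_ts_ms, tolerance_ms=50):
    """Return (frame_index, radar_index) pairs within tolerance_ms of each other."""
    pairs = []
    if not radar_ts_ms:
        return pairs
    for f_idx, t in enumerate(frame_ts_ms):
        r_idx = nearest_index(radar_ts_ms, t)
        if abs(radar_ts_ms[r_idx] - t) <= tolerance_ms:
            pairs.append((f_idx, r_idx))
    return pairs
```

With frames at roughly 30 fps and radar at 10 Hz, several frames map to the same radar sample, which is expected when the stream rates differ.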

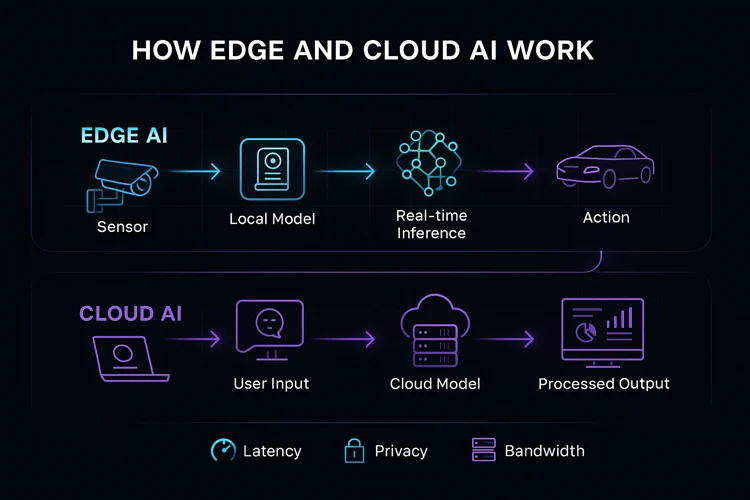

Edge vs Cloud Processing in Fleet Architectures

Fleet platforms typically divide processing between onboard systems and cloud infrastructure.

Edge Processing Advantages

Processing data inside the vehicle enables:

- low-latency safety alerts
- reduced network bandwidth usage
- immediate event detection

For example, a collision warning system must respond instantly without relying on cloud connectivity.

Cloud Processing Advantages

Cloud systems are better suited for:

- large-scale data analytics
- model training
- fleet-wide insights
- historical analysis

Cloud platforms can aggregate data from thousands of vehicles to identify patterns and trends.

Hybrid Architectures

Most modern fleet platforms use a hybrid approach:

- real-time processing occurs at the edge
- deeper analytics occur in the cloud

This architecture balances responsiveness with scalability.

Designing Scalable Fleet Data Capture Systems

When deploying multi-sensor systems across large fleets, several design considerations become critical.

Data Bandwidth

Video and radar streams can generate massive data volumes.

Efficient compression and event filtering are necessary to control bandwidth usage.
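A quick calculation shows why compression matters. The sketch below estimates the uncompressed data rate of a video stream and compares it with an encoded bitrate; the 1.5 bytes per pixel figure corresponds to 8-bit YUV 4:2:0 video, and the 4 Mbit/s encoded bitrate in the usage example is an assumed value, not a measurement.

```python
def raw_video_bytes_per_s(width, height, fps, bytes_per_pixel=1.5):
    """Uncompressed video data rate in bytes per second."""
    return width * height * bytes_per_pixel * fps


def compression_ratio(raw_bytes_per_s, encoded_bits_per_s):
    """How many times smaller the encoded stream is than the raw stream."""
    return raw_bytes_per_s / (encoded_bits_per_s / 8)


raw = raw_video_bytes_per_s(1920, 1080, 30)  # 93,312,000 bytes/s, about 93 MB/s
ratio = compression_ratio(raw, 4_000_000)    # assumed 4 Mbit/s encoder output
```

Under these assumptions a single 1080p camera drops from about 93 MB/s raw to 0.5 MB/s encoded, and event filtering reduces the transmitted volume further by sending only the clips that matter.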

Modular Sensor Interfaces

Fleet hardware must support multiple communication interfaces such as:

- CAN
- CAN FD
- Automotive Ethernet
- USB
- serial interfaces

This flexibility allows systems to integrate with different vehicle models.

Storage and Event Logging

Local storage is often used to retain recent sensor data for incident investigation.

Event-based recording helps reduce storage requirements.
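Event-based recording is commonly built on a ring buffer: the device keeps only the most recent samples in memory and saves a clip when an event fires. The sketch below is one minimal way to do that, assuming fixed pre-event and post-event sample counts; the `EventRecorder` class and its parameters are illustrative.

```python
from collections import deque


class EventRecorder:
    """Keep a rolling window and save a clip around each trigger."""

    def __init__(self, pre_samples, post_samples):
        # Room for pre_samples of history plus the triggering sample itself.
        self._pre = deque(maxlen=pre_samples + 1)
        self._post = post_samples
        self._remaining = 0
        self._clip = None
        self.clips = []

    def push(self, sample, event=False):
        if self._clip is None:
            self._pre.append(sample)
            if event:
                self._clip = list(self._pre)  # history + trigger sample
                self._remaining = self._post
                self._pre.clear()
        else:
            self._clip.append(sample)
            # A new event during recording extends the clip.
            self._remaining = self._post if event else self._remaining - 1
        if self._clip is not None and self._remaining == 0:
            self.clips.append(self._clip)
            self._clip = None
```

Samples that never fall near an event are overwritten in the ring buffer and never reach storage.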

Over-the-Air Updates

Because sensor fusion algorithms evolve over time, systems must support remote firmware and software updates.

Practical Fleet Applications of Multi-Sensor Fusion

Multi-sensor architectures enable several high-value use cases.

Collision Detection

Combining radar and camera data significantly improves object detection reliability.

Radar measures distance and velocity, while cameras provide classification.

Intelligent Speed Assistance

Speed compliance systems can combine:

- GNSS location
- digital speed limit maps
- traffic sign recognition
- CAN bus vehicle speed

This allows fleets to automatically detect and report speeding violations.
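The inputs listed above can be combined in a small decision rule. The sketch below assumes the GNSS position has already been used to look up a map speed limit, prefers a recently recognized traffic sign over the map value, and compares the result with the CAN bus speed. The 30-second sign validity window and 3 km/h tolerance are illustrative assumptions.

```python
from typing import Optional


def effective_limit_kmh(map_limit: Optional[float],
                        sign_limit: Optional[float],
                        sign_age_s: float,
                        max_sign_age_s: float = 30.0) -> Optional[float]:
    """Prefer a recently seen sign; otherwise fall back to the map limit."""
    if sign_limit is not None and sign_age_s <= max_sign_age_s:
        return sign_limit
    return map_limit


def is_speeding(can_speed_kmh: float,
                limit_kmh: Optional[float],
                tolerance_kmh: float = 3.0) -> bool:
    """True if the CAN-reported speed exceeds the limit plus a tolerance."""
    if limit_kmh is None:
        return False  # no known limit, so no violation can be reported
    return can_speed_kmh > limit_kmh + tolerance_kmh
```

Preferring a fresh sign reading covers temporary limits, such as roadworks, that a digital map may not yet reflect.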

Driver Behavior Monitoring

Sensor fusion can detect unsafe driving patterns by combining:

- accelerometer signals
- CAN telemetry
- camera analysis

This helps fleets identify risky driving behaviors such as harsh braking or aggressive acceleration.
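Harsh braking can be detected from CAN speed samples alone by estimating deceleration between consecutive readings. This is a minimal sketch; the 3.0 m/s² threshold is an assumed value for illustration, and a real system would typically cross-check the result against accelerometer data.

```python
def harsh_braking_events(samples, threshold_mps2=3.0):
    """Find harsh braking in time-ordered (timestamp_s, speed_kmh) samples.

    Returns a list of (timestamp_s, deceleration_mps2) for each interval
    whose average deceleration meets the threshold.
    """
    events = []
    for (t0, v0), (t1, v1) in zip(samples, samples[1:]):
        dt = t1 - t0
        if dt <= 0:
            continue  # skip duplicate or out-of-order samples
        decel = (v0 - v1) / 3.6 / dt  # km/h -> m/s, then per second
        if decel >= threshold_mps2:
            events.append((t1, round(decel, 2)))
    return events
```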

Incident Reconstruction

When incidents occur, fused sensor data provides a detailed timeline of events, including:

- vehicle speed
- surrounding traffic conditions
- driver actions
- precise location

This information is critical for safety investigations and insurance reporting.

Security and Data Integrity

Fleet sensor systems must also protect against data manipulation and cyber threats.

Potential risks include:

- GPS spoofing
- unauthorized device access
- sensor data tampering

Mitigation strategies include:

- encrypted telemetry transmission
- secure device authentication
- hardware security modules
- digitally signed event logs

Strong security practices protect the integrity of fleet data and safety systems.
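As an illustration of tamper-evident event logs, the sketch below attaches an HMAC-SHA256 tag to each event. Note that an HMAC is a symmetric message authentication code, used here as a simpler stand-in for a true digital signature, which would use an asymmetric key pair, ideally held in a hardware security module. The function names and record layout are assumptions.

```python
import hashlib
import hmac
import json


def _canonical(event: dict) -> bytes:
    """Deterministic byte encoding so the same event always yields the same tag."""
    return json.dumps(event, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_event(event: dict, key: bytes) -> dict:
    """Wrap an event with an HMAC-SHA256 tag computed over its canonical form."""
    tag = hmac.new(key, _canonical(event), hashlib.sha256).hexdigest()
    return {"event": event, "tag": tag}


def verify_event(record: dict, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = hmac.new(key, _canonical(record["event"]), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, record["tag"])
```

Any change to a stored event, such as editing the recorded speed, causes verification to fail for anyone who holds the key.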

A Capture → Connect → Control Approach to Fleet Intelligence

Many modern fleet platforms structure their architecture around three core layers.

Capture

Sensors collect environmental and vehicle data from the fleet.

Connect

Unified telemetry pipelines transmit data to fleet management platforms.

Control

Fleet systems analyze the data and trigger actions such as:

- safety alerts
- compliance reporting
- operational insights

This layered architecture simplifies deployment while supporting scalable fleet intelligence.

Future Trends in Fleet Sensor Fusion

Fleet data systems continue to evolve rapidly as vehicles become more connected and autonomous.

Several trends are shaping the next generation of architectures.

Edge AI acceleration

More powerful onboard processors allow complex perception algorithms to run directly inside vehicles.

Vehicle-to-Everything (V2X)

Vehicles will increasingly exchange data with infrastructure and other vehicles.

Software-defined vehicles

Vehicle capabilities are increasingly controlled by software rather than hardware changes.

Autonomous fleet technologies

Advanced sensing and fusion systems will play a critical role in autonomous vehicle deployment.

Key Takeaways

Multi-sensor fusion is becoming a foundational capability for modern fleet platforms.

By combining camera, radar, GNSS, and CAN bus data, fleet systems can produce a comprehensive view of both vehicle behavior and the surrounding environment.

For fleet engineers and architects, the most effective systems rely on:

- synchronized sensor data pipelines
- edge processing for real-time insights
- cloud platforms for large-scale analytics
- modular architectures that scale across vehicle types

As fleets continue to adopt connected vehicle technologies, robust multi-sensor fusion architectures will play a central role in improving safety, efficiency, and operational visibility.