SOTIF Compliance in Fleet Speed Governance: Managing Safety of the Intended Functionality

Apr 30, 2026 Resolute Dynamics

TL;DR: SOTIF (ISO 21448) for fleet speed governance is about making sure your speed limiter or intelligent speed assistance behaves safely even when nothing is “broken.” It manages risks from sensor limitations, algorithm edge cases, and mis-specified scenarios across your entire fleet — which is why sensor accuracy feeds SOTIF assessment directly.

Key Takeaways

- SOTIF (ISO 21448) focuses on safety risks from intended functionality, not random hardware or software failures. For modern, software-defined speed governance systems that lean on sensors, maps, and complex logic, SOTIF is as critical as the ECU itself.

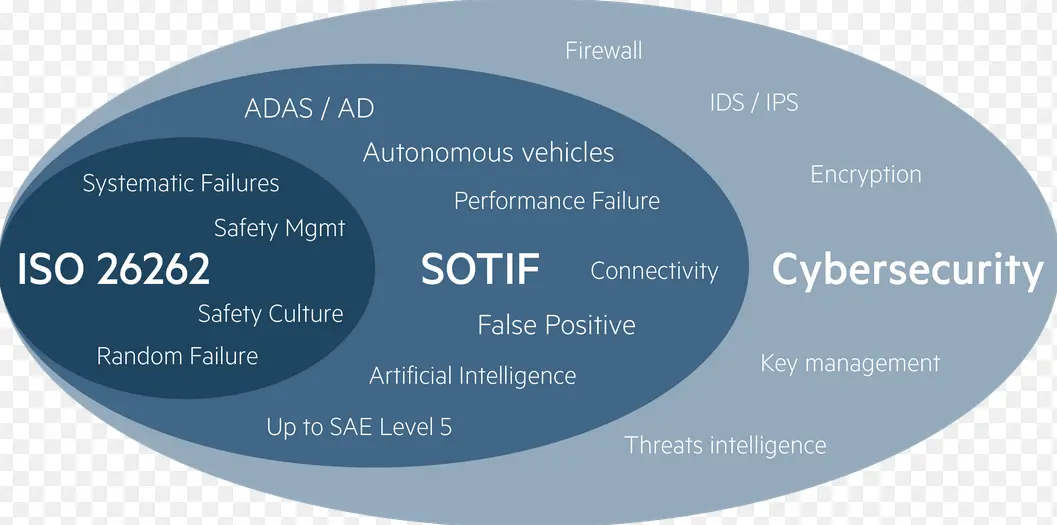

- ISO 26262 vs SOTIF: ISO 26262 addresses failures. SOTIF addresses misinterpretation, functional insufficiency, and scenario gaps. If you want robust fleet safety, you need both, but this page stays on the SOTIF side of the fence. For a deeper ISO 26262 comparison, see our companion guide.

- The four-area model (known/unknown, safe/unsafe) is a practical way to map out your speed governance scenarios and decide where to spend time on tests, simulation, and monitoring.

- Triggering conditions for SOTIF hazards in fleets include GPS outages, tunnels, degraded or occluded signs, stale map data, V2X spoofing, and messy real-world driver behavior that doesn’t match the design assumptions.

- A SOTIF validation framework for fleet-scale deployment blends scenario-based simulation, controlled pilots, large-scale field testing, and post-deployment monitoring into one continuous loop.

- Acceptance criteria and residual risk have to be spelled out. You’ll never show zero risk, but you can show that what’s left has been reduced, is understood, and is better than what you have without the system.

- Resolute Dynamics applies SOTIF to speed governance using sensor fusion in the Capture module, a growing triggering condition database, and continuous feedback from real fleet data.

- SOTIF responsibilities land on both OEM and aftermarket fleet solutions. Operators share the load through configuration, maintenance, driver training, and in-service monitoring.

What Is SOTIF in the Context of Fleet Speed Governance?

Short definition: SOTIF (Safety of the Intended Functionality), defined in ISO 21448, is a safety standard that deals with hazards that come up even when a system behaves exactly as designed. For fleet speed governance, SOTIF focuses on sensor performance limits, algorithm design, scenario coverage, and foreseeable misuse.

It does not deal with random hardware failures or classic software bugs, which are the domain of ISO 26262.

As fleets move from simple mechanical or ECU speed limiters to intelligent speed assistance, map-based limiters, and data-driven control strategies, SOTIF issues start to dominate. You can have a system that passes every ISO 26262 fault-injection test and still puts vehicles in unsafe speed zones because it misreads a sign, trusts a stale map tile, or gets used well outside its operational design domain (ODD).

What Is SOTIF and Why Does It Apply to Fleet Speed Governance?

SOTIF (ISO 21448) deals with safety risks that appear when the intended functionality is working “correctly” but still leads to unsafe behavior. That’s very different from ISO 26262, which focuses on what happens when the hardware or software fails. In speed governance, a lot of the real trouble comes from sensor limitations, algorithm edge cases, and context misinterpretation, not from outright faults.

Old-school speed limiters were easy to reason about. You picked a top speed and the box never let the truck past it. Modern fleet speed governance systems are more capable but also more fragile in subtle ways. They usually combine:

- Camera-based traffic sign recognition

- HD map and speed limit databases

- GPS/IMU localization

- V2X (vehicle-to-everything) messages

- Dynamic rules for weather, time of day, school zones, congestion, and internal fleet policies

None of those pieces has to fail for you to end up with a dangerous outcome. The system can be perfectly “healthy” in ISO 26262 terms and still:

- Show functional insufficiency, like a camera that handles normal daylight great but struggles badly with glare, fog, or a dirty lens, while the software continues to treat it as highly reliable.

- Expose specification insufficiency, where the ODD was scoped to certain road classes and regions, but in reality the same configuration gets pushed into very different environments.

- Hit sensor performance limitations, such as GNSS error in dense urban canyons that shifts a truck onto the wrong road segment and wrong speed zone.

- Run into foreseeable misuse, like drivers habitually overriding the system right at critical points, breaking the assumptions the designer made about how often and when overrides would occur.

These are SOTIF problems. If your fleet is banking on intelligent speed functions to cut speeding-related crashes, reduce claims, negotiate better insurance terms, or satisfy current and upcoming regulations (including UNECE and regional intelligent speed assistance requirements), you have to treat SOTIF with the same seriousness you give to functional safety and cybersecurity.

For deeper coverage of hardware and software failure modes, see our dedicated comparison guide: .

SOTIF vs ISO 26262: Understanding the Difference for Fleet Systems

ISO 26262 looks at random hardware failures and systematic software bugs. SOTIF looks at performance limits and blind spots of sensors and algorithms that are technically doing what they were designed to do. In fleet speed governance, ISO 26262 keeps the system from breaking. SOTIF keeps it from confidently doing the wrong thing.

In day-to-day engineering discussions, the split often sounds like this:

- ISO 26262 functional safety: “What if the ECU crashes mid-trip?” “What if a memory bit flips and corrupts the speed control logic?” The focus is on faults and failures and how the system reacts.

- ISO 21448 SOTIF: “What if road grime blinds the camera?” “What if the map thinks there’s a 90 km/h limit where the sign clearly says 60?” “What if the intervention strategy misreads a driver’s gentle braking as an agreement to speed up later?” Here the focus is on intended function under unanticipated or under-specified conditions.

For fleet speed governance systems, a typical ISO 26262 scope covers things like:

- Safe power-up and power-down of the speed control module, so you never get stuck in a half-initialized state.

- Mechanisms to detect internal computation errors and corrupt memory.

- Watchdogs, redundancy, and defined safe-state behavior if a fault is detected.

SOTIF, on the other hand, asks harder questions that sit much closer to real-world operation:

- Is the operational design domain (ODD) truly clear, including geography, road types, weather, lighting, and speed ranges where the function is supposed to be “on”?

- Do we understand where sensor performance limitations will show up, such as tunnels, heavy urban areas, night driving, rain, snow, or ongoing roadworks?

- Have we captured known safe scenarios and known unsafe scenarios, and do we have a process to actively hunt for unknown unsafe scenarios that we haven’t seen yet?

- Does the graduated intervention strategy still behave safely when you factor in how people actually drive, including edge cases like last-second lane changes, harsh braking, or mixed use of cruise control and limiters? Our graduated intervention SOTIF-safe guide breaks down each level.

ISO 26262 and SOTIF work together. They are complementary standards, not competing frameworks. Many fleets secure functional safety compliance for their ECUs and then find out that a big chunk of real incidents are driven by SOTIF-type failures: wrong speed zones applied, confusing warnings, or the system quietly running outside its intended ODD.

Our related article, , walks through how these standards line up across the development and operational lifecycle.

The Four-Area Model Applied to Fleet Speed Governance

Think of the four areas like a map of where your system behaves safely and where it bites you. You have known safe scenarios where you’ve shown the system behaves correctly. You have known unsafe ones where you already know it misbehaves. Then there are unknown safe and unknown unsafe pockets, where you simply haven’t tested or seen enough yet. Fleet operators should be pushing Area 1 to grow, keeping Area 2 under tight control, and shrinking Area 3 as aggressively as possible.

ISO 21448 describes a conceptual four-area model that fits speed governance very well. It classifies operating scenarios along two axes:

- Safe vs. unsafe outcome

- Known vs. unknown to the development team

For fleet speed governance, deliberately mapping scenarios into these areas gives you a shared language between safety engineers, operations, and management. It makes it much easier to decide where to invest in testing, enhanced data capture, or immediate mitigation.

Area 1 — Known Safe

Area 1 is the comfort zone. These are scenarios where you expect the system to behave safely and you’ve actually demonstrated that through analysis, simulation, testing, or a solid mix of all three.

Common Area 1 examples for speed governance include:

- A truck cruising on a clear motorway with accurate GPS and up-to-date maps, where the speed limiter cleanly maintains a configured cap below the posted limit.

- A camera correctly reading a 50 km/h sign, confirming it with the map database, then commanding a gradual deceleration from 70 to 50 km/h that feels natural to the driver.

- A short tunnel where GPS signal is lost, the system recognizes the degradation, freezes the last valid limit with a small safety margin, and shows the driver that confidence is temporarily reduced.

To build and grow Area 1 for your fleet, you typically rely on:

- A solid requirements and ODD specification that spells out where the function is intended to work and what’s out of bounds.

- Scenario-based testing across simulation and proving grounds, covering normal use plus stress conditions where you still expect the system to succeed.

- Verification and validation evidence, like coverage reports, pass/fail metrics, robustness checks, and targeted regression tests.

Over time, you want to keep pushing scenarios from “we think it’s fine” to “we’ve proven it’s fine.” That’s how you systematically grow Area 1 instead of relying on gut feel.

Area 2 — Known Unsafe

Area 2 is where you know you’re exposed. These are scenarios your engineering or operations teams already recognize as potentially unsafe for the intended function. For SOTIF compliance, simply being aware is not enough. You have to eliminate or mitigate these situations.

Some very typical Area 2 examples in fleet speed governance are:

- Dense “urban canyon” zones where reflected GPS signals regularly shift vehicles onto wrong lanes or parallel roads, pushing them into wrong speed zones.

- Regions with a lot of non-standard or temporary signs, such as roadworks or local variations, that your camera model doesn’t recognize reliably.

- Map tiles known to be out of date, for example, where speed limits were recently lowered but the backend data hasn’t caught up.

- Specific patterns of driver override behavior combined with automated interventions that end up temporarily lifting the vehicle above a safe speed in high-risk spots. We explore driver override as SOTIF scenario in detail in a separate ethics piece.

Mitigating Area 2 in fleets usually involves a mix of design and operational measures, such as:

- ODD restriction: explicitly disabling certain features in those problem locations or conditions, rather than pretending everything is fine.

- Degraded mode: applying larger safety margins, down-weighting unreliable sensors, or switching to advisory-only mode instead of direct control.

- Accelerated map data updates and tougher validation processes for high-risk segments.

- Targeted driver alerts and training that call out locations or situations where the system’s limitations are known.

From a SOTIF angle, leaving a known unsafe scenario in Area 2 without any mitigation is not acceptable. Once you’ve identified a hazard, ISO 21448 expects you to either tighten the ODD or change the system so the hazard is controlled or removed.

Area 3 — Unknown Unsafe

Area 3 is the one that keeps safety engineers awake. These are scenarios that lead to unsafe outcomes but that the team has not yet identified. Many of the most serious SOTIF incidents in the field come from here. They are the “we didn’t think that combination would ever happen” cases.

For fleet speed governance, Area 3 might contain situations like:

- Odd combinations of rain, low sun, lane markings, and awkward sign placement that cause your sensor fusion stack to misclassify the road or miss a speed change.

- Corner cases in graduated intervention logic where the mix of braking, accelerator input, or cruise control state leads the system to the wrong conclusion about driver intent.

- Mis-labeled or unvalidated V2X messages from infrastructure that look valid but describe the wrong limit or road section.

- New road types or traffic schemes introduced after your system was designed, such as smart corridors or variable limits, which never made it into the original ODD assumptions.

SOTIF pushes you to set up a systematic strategy to drag scenarios out of Area 3 and into visibility:

- Use scenario generation & variation in simulation to probe rare or extreme conditions instead of only running “happy path” tests.

- Run continuous field monitoring and data mining across the fleet to spot odd patterns in interventions, overrides, or speed excursions.

- Feed in driver incident reports, customer complaints, and helpdesk logs as SOTIF evidence, not just customer service noise.

- Perform cross-fleet analytics, since low-frequency, high-impact scenarios often only show up once you aggregate data from many vehicles and regions.

One big upside of managing speed governance at fleet scale is that you can actually do this. Once you have the pipeline, you can convert Area 3 unknowns into Area 2 known unsafe scenarios, then fix them and move them into Area 1. That’s how SOTIF maturity improves year after year.

Area 4 — Unknown Safe

Area 4 is where the system behaves safely, but you haven’t explicitly verified it yet. Nobody has written the test case, or the scenario hasn’t shown up in your data with enough volume to analyze. In reality, a lot of everyday driving starts here.

Examples for speed governance might include:

- A rare type of rural signage or regional variation that your camera and map logic handle just fine, even though it never made it into the original test catalog.

- Unexpected combinations of fleet-specific constraints, like heavy payload plus trailer plus steep grade, where your conservative logic still keeps speed and stopping distance well within safe limits.

Area 4 is less worrying than Area 3 because outcomes are safe, but it still matters for building confidence. Over time, your monitoring and analytics should reclassify some of these scenarios into Area 1, backed by concrete evidence rather than assumptions.

Resolute Dynamics uses this four-area mapping in its SOTIF area classification workflow. It gives a structured way to decide which issues deserve an immediate fix, which need more data, and where additional testing will have the biggest impact on real-world safety.

Triggering Conditions for Fleet Speed Governance SOTIF Hazards

In practice, SOTIF problems don’t just appear out of thin air. They show up when specific combinations of environment, hardware, software, and driver behavior line up just right. Those combinations are what ISO 21448 calls triggering conditions, and ignoring them is where many projects go wrong.

A triggering condition is a concrete mix of environmental factors, system state, and user actions that exposes a performance limit or design insufficiency and can lead to an unsafe outcome. On the bench, everything looks fine. On a certain wet Tuesday night near a tunnel entrance, it doesn’t.

For fleet speed governance, triggering conditions tend to arise from interactions across:

- Sensors, including camera, GNSS, IMU, and V2X receivers

- Perception and fusion algorithms that decide which data to believe

- Control logic that chooses the speed limit, warnings, and interventions

- Driver behavior and predictable misuse patterns

- Environment and infrastructure, such as road layout, signage, and weather

Below are representative triggering conditions that fleets should describe, tag, and track over time instead of treating them as one-off anecdotes.

Sensor and Localization Triggering Conditions

- GPS loss or degradation: Tunnels, underground car parks, urban canyons, and high bridges can all disrupt GNSS. If your system leans too heavily on approximate position, it may latch onto the wrong speed zone or road segment when signal quality drops.

- GNSS/IMU drift: Long stretches without proper corrections can slowly push the perceived vehicle position sideways onto a parallel road, for example a service road with a much lower limit than the main highway, or vice versa.

- Camera occlusion or degradation: Dirt, salt, snow, heavy rain, bugs on the lens, or strong sun glare can dramatically reduce the accuracy of traffic sign recognition, and at night haloes from headlights or streetlights can confuse detection. If the system doesn’t recognize the degradation, it might hold onto a bad speed value for too long.

- Camera field-of-view limits: Signs tucked behind trucks, partially hidden by trees, or mounted at unusual heights can be missed completely or only picked up very late. That small timing error can flip a safe deceleration into a harsh braking event or even a missed slowdown.

Map and Data Triggering Conditions

- Map data staleness: Newly changed limits, re-zoned areas, temporary work zones, or brand-new roads often lag in map databases. The system may force drivers to follow limits that are too high, too low, or simply wrong for the current layout.

- Incorrect map matching: If the map matcher snaps the vehicle to the wrong road geometry, you can end up with the limit from a frontage road, off-ramp, or nearby side street instead of the main route the fleet is actually using.

- Variable speed limits: Digital gantries and weather-dependent limits often change faster than static map layers can be updated. If your system assumes static data in a dynamic context, it risks applying outdated numbers at the worst times.

V2X and Connectivity Triggering Conditions

- V2X message spoofing or corruption: Faulty or malicious roadside units can broadcast incorrect speed limits or zone information. If your logic trusts V2X more than other sources without proper checks, a single bad transmitter can affect a lot of vehicles.

- Partial or delayed over-the-air updates: If some vehicles receive updated rules or data and others do not, you can see inconsistent speed governance behavior across the same fleet in the same location, which complicates both safety analysis and driver expectations.

Driver Behavior and Misuse Triggering Conditions

- Repeated override of interventions: If drivers routinely push past limits or cancel warnings, the system’s internal assumptions about typical compliance fall apart. The result is a growing gap between what engineering thought would happen and what happens in daily operation.

- Unexpected control combinations: Drivers mix cruise control, limiters, hill descent control, and manual gear selection in creative ways. Light brake touches followed by throttle taps can create sequences that the designers never modeled, especially on grades or in heavy traffic.

- Incorrect configuration: Mis-set ODD parameters, wrong policy profiles, or sloppy cloning of settings between regions can effectively run the system outside its validated envelope, even though every ECU is technically “working as designed.”

Our companion article on digs into the ethical and policy angles of overrides and incentives. Here we’re just treating override behavior as a technical triggering condition that needs to be modeled and tested like any other input.

Building and Using a Triggering Condition Database

A serious SOTIF strategy doesn’t live in someone’s notebook. It relies on a structured triggering condition database that evolves over time. Each entry usually captures things like:

- Scenario description, including its mapping to SOTIF area 1–4.

- Which sensors, data sources, and software components are involved.

- Environmental context such as location, weather, lighting, traffic, and road type.

- Observed frequency in real fleet data, even if it’s low probability.

- Estimated severity and exposure for safety analysis.

- Existing mitigations plus proposed design or policy changes.

By maintaining this database and keeping it live, fleets can:

- Focus SOTIF verification and validation on scenarios that matter instead of scattering effort randomly.

- Refine ODD boundaries and configuration rules based on real evidence, not just design intent.

- Plan software and data updates so they target the most impactful hazards first.

Resolute Dynamics wires this database into our SOTIF verification validation framework. Field events feed into the database, which then shapes new simulation campaigns, which then feed back into design and OTA updates. That loop is what turns SOTIF from a one-time exercise into an operational discipline.

SOTIF Validation Strategies for Fleet-Scale Deployment

Validating SOTIF for speed governance is very different from proving that a single component passes a bench test. You’re trying to show that residual risk out on the road is driven down to an acceptable level compared to your baseline, whether that’s human-only driving or an older generation limiter.

In practice, a solid SOTIF validation framework for fleets stacks several layers on top of each other. Each layer catches different classes of problems and feeds information into the next.

1. Scenario-Based Simulation and Bench Testing

Scenario-based testing is where most SOTIF work should start. You’re not just checking basic functionality. You’re deliberately bending the environment and inputs to see where the intended function struggles.

- Use capable simulation tools to build realistic roads, signage, traffic, and weather. Include the nasty bits, not just the brochure-grade scenes.

- Inject sensor performance limitations like noise, occlusion, delays, and mis-calibration to see how perception and control respond.

- Systematically vary triggering conditions. For example, tweak distances to signs, map vs camera disagreements, V2X reliability, and timing between detection and intervention.

- Apply Monte Carlo or combinatorial methods so you cover wide parameter ranges you’d never manage just driving one test truck around.

Simulation is even more powerful when you bring in hardware-in-the-loop (HIL) or software-in-the-loop (SIL). You feed real ECUs or software containers with synthetic sensor streams and watch where assumptions crack. Spotting those issues here is a lot cheaper than discovering them from crash reports.

2. Controlled Fleet Piloting (Limited ODD)

Once the simulation results look stable, you move into the real world. But you don’t throw it across the entire fleet on day one. You start with controlled pilots.

- Pick a limited set of vehicles and define a tight ODD: specific regions, road classes, operating hours, and maybe even weather bands.

- Equip those vehicles with richer logging of sensor data, intermediate decisions, and driver actions so you can replay what happened later.

- Watch for unexpected interventions, overrides, or driver complaints that point to SOTIF gaps rather than just user preference.

- Feed what you learn back into your triggering condition models so simulation keeps getting closer to reality.

At this stage, your SOTIF area classification should be a living artifact. As you see real scenarios, some move from Area 4 to Area 1, some from Area 3 into Area 2 once you realize they’re unsafe. Newly discovered Area 2 scenarios drive redesign and more detailed testing.

3. Field Operational Testing at Scale

Once pilots are stable and your known issues are managed, you can expand to field operational testing across a larger slice of the fleet and a broader ODD. This is where you start to get statistically meaningful evidence instead of just anecdotes.

- Log critical events such as sharp decelerations, abrupt speed limit changes, frequent overrides, and any divergence from expected behavior.

- Deploy on-vehicle or backend event detectors that automatically flag patterns that look like SOTIF issues instead of combing through raw logs by hand.

- Run regular SOTIF data mining sessions where safety engineers and data scientists cluster events, correlate them with environment, and update your risk picture.

- Review high-severity or unclear cases with experienced engineers, not just automated scoring scripts.

This is where the scale of a fleet becomes powerful. A few hundred vehicles running for months will hit weird edge cases that a single development car might never see. That’s exactly the kind of coverage SOTIF needs.

4. Post-Deployment Continuous Monitoring

SOTIF work doesn’t stop when the speed governance product reaches SOP or the first big deployment. Once the system is live across the fleet, you need a permanent field monitoring SOTIF capability.

- Define clear KPIs and leading indicators, such as unexpected overrides per 10,000 km, time spent above safe target speeds, or variance from expected intervention patterns.

- Use backend analytics to pick up drifts in behavior. For example, new infrastructure, signage changes, or regulatory shifts can slowly push you into new scenario space.

- Put in place a robust process for over-the-air (OTA) updates, including pre-deployment validation, staged rollouts, and post-update monitoring tied to SOTIF metrics.

Regulators are increasingly expecting this kind of lifecycle approach. The mindset is similar to UNECE WP.29 cybersecurity and software update rules and the UNECE WP.29 ALKS regulation for lane-keeping. Even if the text does not always call SOTIF out by name, the expectation is that you manage how the function behaves in real use over time, not just at type approval.

5. Acceptance Criteria and Residual Risk for Fleet Speed Governance

SOTIF puts a lot of emphasis on acceptance criteria for residual risk. You have to be clear about what risk you’re accepting, how often remaining hazards might occur, and why you think that’s reasonable. For speed governance, those criteria typically involve:

- Comparing crash rates, near-miss frequency, and speeding incidents with and without the system, or against a legacy system.

- Bounding how often known triggers can still lead to unsafe behavior after mitigations are in place.

- Showing that, even with limitations, the system reduces risk versus current practice by a meaningful margin.

A few practical tips that people often skip in the rush to sign off:

- Calibrate against a human baseline: Look at scenarios where humans already struggle, such as complex junctions or high-variability limits. If your speed governance system delivers a clear reduction in human errors there, that supports a stronger argument for accepting some residual risk.

- Account for fleet heterogeneity: Braking performance and safe speed margins aren’t the same for a half-loaded van and a fully loaded articulated truck. Your acceptance criteria should reflect actual braking capability and use cases, not just posted limits.

- Record decision trade-offs: If you choose to err on the side of slower speeds in some locations and that impacts delivery times, document the reasoning. Those trade-offs matter when talking to management, drivers, regulators, and insurers.

Resolute Dynamics embeds these acceptance steps in our SOTIF verification validation framework. Both engineering and operations teams participate in sign-off, so residual risk is understood and owned instead of being assumed away.

How Resolute Dynamics Addresses SOTIF in Speed Governance

Resolute Dynamics has built its speed governance stack with SOTIF in mind from the start. That means we don’t just bolt on a few tests. We shape the architecture, algorithms, and fleet processes around the way ISO 21448 expects systems to behave and evolve over time.

Resolute Dynamics applies ISO 21448 SOTIF principles directly to fleet speed governance programs across different vehicle classes and regions. The details vary per customer, but the core building blocks stay consistent.

Sensor Fusion to Reduce Single-Point Performance Limitations

Our Capture module pulls together multiple data sources instead of trusting any single one blindly:

- Camera-based speed sign recognition for real-time visual cues.

- GNSS + IMU-based localization to provide position and vehicle dynamics.

- HD map speed limit data for broader context and look-ahead capability.

- V2X messages where infrastructure supports it.

Through sensor fusion and confidence-weighted rules, Capture deliberately reduces SOTIF vulnerabilities such as:

- Relying on a dirty, misaligned, or partially blinded camera long after its output has become unreliable.

- Overriding a clear camera reading with stale or low-confidence map data.

- Accepting unverified V2X messages as truth without checking them against other information channels.

Fusion behavior is tightly coupled to ODD definitions. For example, in downtown zones where GNSS is notoriously noisy, the system can lower trust in map-based matching and lean more on robust visual cues, or shift to conservative default limits when both data sources look shaky. Our related article on goes into how we manage calibration and data accuracy so that SOTIF performance stays solid over the whole lifecycle.

Triggering Condition Database from Large-Scale Fleet Data

Resolute Dynamics maintains a living triggering condition database based on anonymized telemetry from 200K+ vehicles running in many different environments. That scale lets us see patterns that a single fleet would never catch on its own.

- We can spot new Area 3 unknown unsafe scenarios quickly as they appear across multiple fleets or regions.

- We can compare how often certain hazards occur in different environments, such as ports, city centers, or rural routes.

- We can share summarized lessons across customers without exposing sensitive operational data.

Every new triggering condition that shows up in the field goes through the same loop:

- Engineers replay it in simulation to unpack exactly how the failure unfolded.

- It gets a SOTIF area classification, usually landing in Area 2 or Area 3 to start with.

- The condition then drives updates, ranging from algorithm tuning and new ODD guardrails to changes in driver messaging and training material.

Because this loop is continuous, SOTIF coverage improves as mileage grows. The system is not frozen at launch quality. It matures with every new data batch.

Continuous Monitoring Pipeline and SOTIF in Production

To line up with SOTIF expectations and the spirit of UNECE WP.29 on software updates, Resolute Dynamics runs a monitoring and analytics pipeline for customers in production.

- We collect event-level data for anomalies such as unexpected limit jumps, repeated driver overrides in the same spots, and intervention patterns that don’t match the intended design.

- We use a mix of machine learning and rule-based detectors to highlight events that look like SOTIF issues, instead of flooding teams with every minor quirk.

- We route curated events into our SOTIF verification validation framework so that they feed the next round of analysis and updates.

This monitoring framework supports several operational needs:

- OTA updates that are backed by SOTIF evidence, rather than just delivering new features or map tiles.

- Customer-specific residual risk dashboards that allow safety managers to see how the system is performing and where effort is paying off.

- Consistent reporting for regulators and insurers, helping demonstrate real-world safety improvements and proactive risk management.

Graduated Intervention Designed for SOTIF Safety

The way speed interventions are staged and escalated has a big impact on SOTIF. A technically correct limit applied in a surprising or aggressive way can still be unsafe. Our work on graduated intervention strategies is tightly linked to SOTIF assumptions and validation results.

Details on intervention patterns (advice → warning → soft limit → hard limit) and tuning are covered on another page. For the SOTIF angle, see: .

From a SOTIF perspective, our focus is on:

- How drivers actually respond to each intervention level, based on field data instead of wishful thinking.

- When interventions risk creating surprise, distraction, or frustration that might lead to new hazards.

- How override mechanisms interact with the safety case, including how often and in what context we allow overrides before escalating or logging alerts.

The result is a speed governance setup that’s not only functional in a lab sense, but also SOTIF-compliant in day-to-day fleet operation.

Common SOTIF Mistakes in Fleet Speed Governance (and How to Fix Them)

Mistake 1: Treating ISO 26262 Compliance as Sufficient

Problem: A lot of teams assume that if the ECU is ISO 26262-certified and they’ve done basic on-road tests, their speed governance solution is automatically safe.

Why it’s risky: Functional safety won’t catch misread environments, fuzzy ODD definitions, or gaps in the scenario catalog. Most real-world speed governance issues show up with fault-free hardware and software.

Fix: Stand up a separate SOTIF workstream aligned with ISO 21448. Give it its own hazard analysis, scenario catalog, and validation plan so SOTIF doesn’t get buried under generic functional safety work.

Mistake 2: Vague or Missing ODD Definition

Problem: Some fleets roll out their systems “everywhere” without any formal ODD, then act surprised when behavior in untested conditions looks rough.

Why it’s risky: You can’t make a solid SOTIF argument if you can’t say where the system is intended to operate and under what constraints.

Fix: Define the operational design domain explicitly. That means road classes, regions, weather conditions, time of day, speed ranges, and any operational constraints. Configure the system to respect those limits so it doesn’t quietly wander outside validated territory.

Mistake 3: Ignoring Foreseeable Misuse

Problem: Driver overrides, policy workarounds, and misconfigurations are often blamed on “bad drivers” or “non-compliant depots” and left out of the technical analysis.

Why it’s risky: ISO 21448 expects you to study foreseeable misuse. In fleets, business pressures can make override misuse very predictable. Treating it as someone else’s problem doesn’t fly in a SOTIF review.

Fix: Add override patterns and misuse behaviors to your triggering condition database. Use them in validation and feed them into driver training and policy design. For deeper ethical and policy discussion, see our piece on .

Mistake 4: Underestimating Sensor Calibration and Aging

Problem: Many programs assume sensors will keep working like new for years and treat calibration as a one-time manufacturing step.

Why it’s risky: Camera mounts loosen, lenses scratch, and vibration shifts alignments. Over time, those small drifts become new SOTIF hazards, especially for camera-heavy systems.

Fix: Build in sensor health monitoring and periodic calibration checks. Design algorithms to be tolerant to gradual degradation and to flag clearly when inputs drop below usable quality. See for more details on managing sensor lifecycle.

Mistake 5: No Structured Field Monitoring for SOTIF

Problem: Some fleets rely purely on driver complaints, serious incidents, or insurer feedback to learn about issues.

Why it’s risky: A lot of SOTIF problems show up as near-misses, confusing interventions, or minor anomalies that never turn into full crashes. If you don’t monitor for them, you miss early warning signs.

Fix: Set up field monitoring SOTIF with clear event signatures, such as repeated overrides at the same GPS coordinates, sharp decelerations after misapplied limits, or abnormal patterns in intervention timing. Feed those events into your SOTIF verification validation framework so they drive continuous improvement.

FAQ: SOTIF Compliance for Fleet Speed Governance

Is there a formal SOTIF certification for fleet speed governance systems?

ISO 21448 is a standard, not a certification in itself. That said, independent auditors, technical services, and some regulators are beginning to ask for evidence that your development process, validation activities, and field monitoring are aligned with SOTIF principles. A well-documented SOTIF approach can strengthen your position in regulator meetings, insurer negotiations, and big customer tenders.

What are fleet operators actually responsible for under SOTIF?

Even if the OEM or a vendor designed the speed governance system, fleet operators aren’t off the hook. They’re responsible for using it within its ODD, keeping sensors in decent shape, configuring policies correctly, and watching how the system behaves in service. If you knowingly run beyond validated regions or ignore recurring high-risk triggers in your operation, the responsibility is shared.

How expensive is SOTIF testing and validation compared to traditional testing?

SOTIF does add cost, mainly through scenario-based simulation, extra data analysis, and field monitoring. The upside is that these investments are reusable. Once you build a solid scenario library and data pipeline, adding more vehicles or regions usually has a much lower marginal cost. Many fleets find that the reduction in incidents, smoother regulatory dealings, and better insurance positions easily justify the upfront effort.

How does SOTIF relate to ISO 26262 in practice for speed governance?

Think of ISO 26262 as keeping the system controlled when faults occur and SOTIF as keeping a fault-free system from doing unsafe things. For speed governance, ISO 26262 covers hardware and software failures. ISO 21448 covers how the intended functionality handles complex environments and scenarios. In real safety cases, both standards are usually cited, with a clear split between failure-related and scenario-related hazards.

Does SOTIF apply to aftermarket fleet devices or only OEM systems?

SOTIF applies to any system whose intended functionality can introduce or mitigate safety hazards. That includes aftermarket devices, retrofit kits, and mobile-based solutions, not just OEM-integrated systems. Aftermarket solutions do face extra integration challenges, especially around sensor placement and power, but they still need to address sensor limitations, ODD boundaries, foreseeable misuse, and field monitoring.

Do we need to model SOTIF for advisory-only speed warning systems?

Yes, though the risk profile is different from hard limiters. Advisory-only systems can still create distraction, confusion, or over-trust if they show the wrong limits or behave inconsistently. SOTIF analysis should cover how drivers interpret and respond to warnings, not just whether a warning was technically correct.

How does SOTIF impact compliance with UNECE WP.29 or ALKS regulations?

UNECE WP.29 regulations on software updates and cybersecurity, and the UNECE WP.29 ALKS regulation, all emphasize lifecycle safety, clear ODD definitions, and sound update practices. While SOTIF isn’t always named outright, the expectations line up closely. Regulators want proof that you understand how your intended functions behave over time in the real world and that you have a process to correct issues as they appear.

Can simulation alone satisfy SOTIF validation for fleet speed governance?

No. Simulation is critical, but ISO 21448 expects a blend of analysis, simulation, prototype testing, and field monitoring. Real drivers, local infrastructure quirks, and regional practices are hard to model perfectly. You need field operational testing and ongoing monitoring to capture those effects and to keep your SOTIF case valid as conditions change.

Final Summary and Next Steps

SOTIF compliance for fleet speed governance is about far more than passing a lab test. It’s an ongoing, data-driven discipline that keeps your intelligent speed limiting, advisory, and intervention functions behaving safely in the mess of real-world conditions, including ones you haven’t thought of yet.

By:

- Understanding how ISO 26262 functional safety and ISO 21448 SOTIF differ and fit together,

- Using the four-area model to classify scenarios and prioritize your safety work,

- Managing triggering conditions and ODD boundaries as living artifacts, not static documents,

- Running a robust SOTIF verification validation framework that covers simulation, pilots, and broad field operation, and

- Building continuous monitoring so residual risk steadily declines instead of drifting upward,

fleets can significantly raise safety levels, improve regulatory readiness, and build real trust in automated speed governance.

Resolute Dynamics supports fleets across this whole path, from early ODD definition and scenario modeling to in-service monitoring and SOTIF-driven OTA updates. To see how SOTIF can be tailored to your vehicles, routes, and operational constraints, contact our team or explore our related resources:

Next step: Take your current speed governance functions and map their behavior into the SOTIF four-area model. Then list the top five triggering conditions you know exist but can’t yet quantify. That short list gives you the most practical starting point for a serious, actionable SOTIF roadmap.